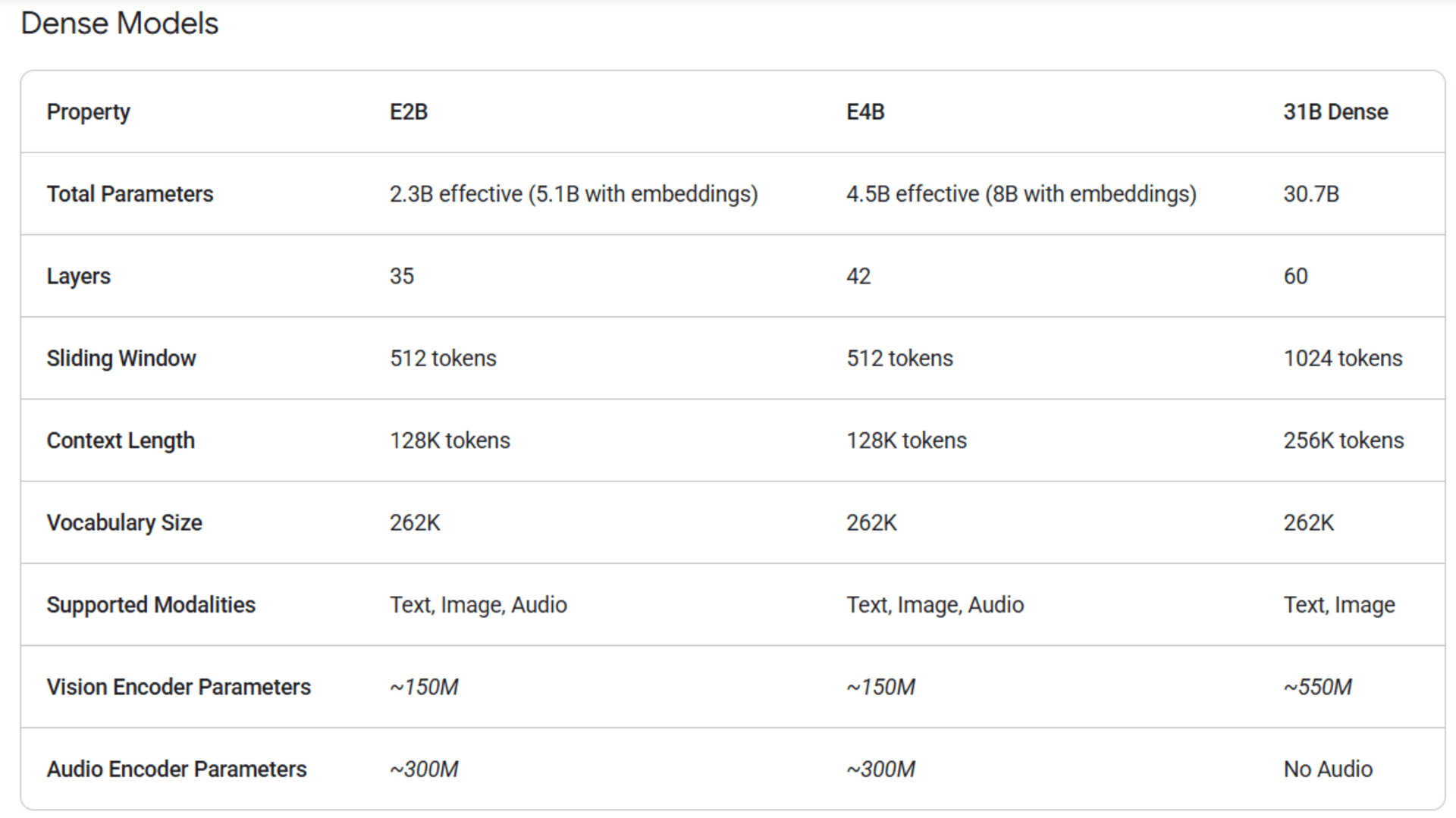

4 model sizes:

E2B / E4B - Edge models, run on-device (a Raspberry Pi or your phone, <1.5GB RAM)

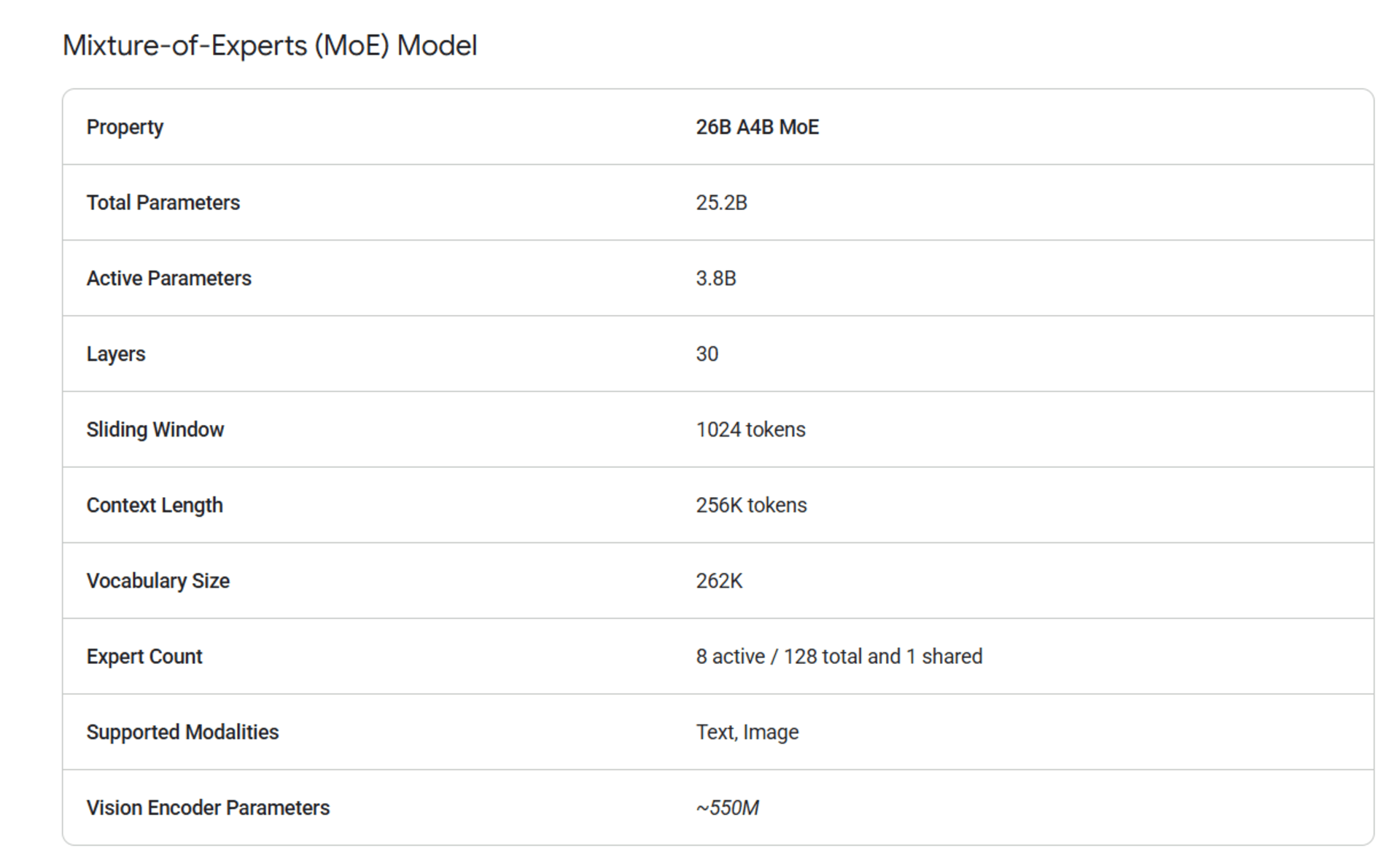

26B A4B MoE - only 3.8B params active at inference

31B Dense

Specs from the model card[1]
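
To put the "3.8B active" figure in context, here is a rough back-of-the-envelope sketch. It assumes the total parameter counts are exactly the 26B and 31B in the model names, and that per-token compute scales roughly with the number of active parameters. Both are simplifications rather than figures from the model card, and the variable and function names are illustrative only. All 26B weights typically still have to be held in memory, so the saving is in compute per token, not in footprint.

```python
# Rough arithmetic on the MoE's active parameters.
# Assumption: totals are taken at face value from the model names (26B, 31B).
MOE_TOTAL_B = 26.0    # "26B" in the model name
MOE_ACTIVE_B = 3.8    # active params at inference, as stated in the post
DENSE_TOTAL_B = 31.0  # "31B Dense": every parameter is active for every token


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of the model's parameters used for each token."""
    return active_b / total_b


def dense_to_moe_ratio(dense_b: float, moe_active_b: float) -> float:
    """How many times more parameters the dense model touches per token."""
    return dense_b / moe_active_b


moe_fraction = active_fraction(MOE_ACTIVE_B, MOE_TOTAL_B)
ratio = dense_to_moe_ratio(DENSE_TOTAL_B, MOE_ACTIVE_B)

print(f"MoE active share: {moe_fraction:.1%}")        # about 14.6%
print(f"Dense vs MoE active params: {ratio:.1f}x")    # about 8.2x
```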

Gemma 4 benchmarks (31B unless noted)

Reasoning

• MMLU Pro (massive multitask language understanding): 85.2%

• GPQA Diamond (graduate-level science Q&A): 84.3%

• AIME 2026 (American Invitational Mathematics Examination): 89.2%

• MMMLU (multilingual MMLU, 140+ languages): 88.4%

Coding

• LiveCodeBench v6 (real-world coding problems): 80.0%

• Codeforces Elo (competitive programming rating): 2150

Vision

• MMMU Pro (college-level multimodal reasoning): 76.9%

• MATH-Vision (math problems with visual context): 85.6%

Audio (E2B / E4B only)

• FLEURS WER (word error rate on speech recognition, lower is better): 0.08

• CoVoST (speech-to-text translation): 35.54

When to use which

| Scenario | Best choice |
|---|---|
| Cloud, quality is the priority | 31B Dense |
| Cloud, you're serving millions of requests and cost matters | 26B MoE (only 3.8B params active at inference, so cheaper per token) |
| On-device / mobile / edge | E2B or E4B |
| Audio transcription | E2B or E4B (only these two support audio) |
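
The table can also be written as a small decision function. This is a minimal sketch: `pick_models` and its flags are hypothetical names chosen for illustration, and the returned strings are the size labels used in this post, not official model identifiers.

```python
def pick_models(
    on_device: bool = False,
    needs_audio: bool = False,
    cost_sensitive: bool = False,
) -> list[str]:
    """Return the model sizes the table above recommends for a scenario."""
    # Audio and on-device are hard constraints: per the table, only the
    # edge models support audio, and they are the on-device choice.
    if needs_audio or on_device:
        return ["E2B", "E4B"]
    # Everything else is a cloud deployment.
    if cost_sensitive:
        # Fewer active params per token, so cheaper to serve at scale.
        return ["26B A4B MoE"]
    # Default: quality is the priority.
    return ["31B Dense"]


print(pick_models())                     # cloud, quality first
print(pick_models(cost_sensitive=True))  # cloud, high volume
print(pick_models(needs_audio=True))     # audio transcription
```

Checking the audio and on-device flags first means a cost-sensitive audio workload still lands on E2B or E4B, which matches the table's note that only those two sizes support audio.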

All these models are released under the Apache 2.0 license.